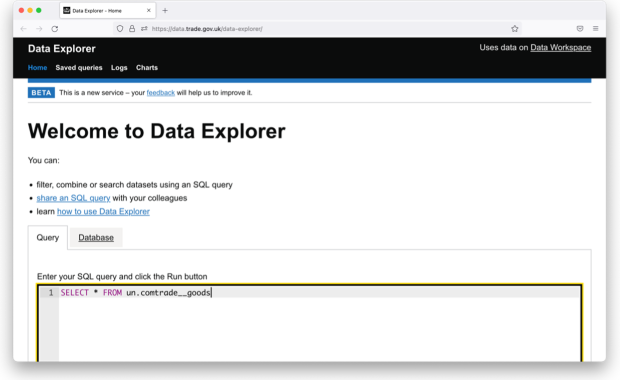

At the Department for International Trade (DIT), we extensively use Structured Query Language (SQL), especially in the Digital, Data and Technology (DDaT) team. As discussed in our previous blog post on SQL, and in accordance with our data strategy, we created Data Workspace. Data Workspace is a platform where DIT staff can use SQL to access and analyse data. This data is collected from a wide variety of both internal and external sources to help us make data-driven decisions.

We were asked to share a subset of our data with the Prime Minister’s Office at Number 10 to help them make data-driven decisions. Our use of SQL, our infrastructure and Number 10’s infrastructure meant we could quickly set up a system to do this regularly.

Iterating quickly on what data to send

It was straightforward for our domain experts at DIT to explore our data because we offer interfaces to use SQL in Data Workspace. We were able to construct and refine a query to extract only what Number 10 needed. Using SQL also offered a separation between the data and the query. Once the query was constructed, it could be easily re-run every time there was a data update.
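To illustrate the separation between the data and the query, here is a minimal sketch using Python's built-in sqlite3 module. The table, columns and figures are made up for illustration and are not our real schema or data. The point is only that the same query text can be re-run unchanged after the data is updated.

```python
import sqlite3

# Hypothetical query: the table and column names are illustrative only.
QUERY = """
    SELECT region, SUM(value_gbp) AS total_value_gbp
    FROM investment_projects
    WHERE sector = 'net zero'
    GROUP BY region
    ORDER BY region
"""


def run_query(conn):
    """Run the fixed query against whatever data is currently in the database."""
    return conn.execute(QUERY).fetchall()


# An in-memory database stands in for the real data store.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE investment_projects (region TEXT, sector TEXT, value_gbp INTEGER)"
)
conn.executemany(
    "INSERT INTO investment_projects VALUES (?, ?, ?)",
    [
        ("North East", "net zero", 100),
        ("Wales", "net zero", 50),
        ("Wales", "other", 70),
    ],
)
first_result = run_query(conn)

# A data update arrives; the query itself does not change.
conn.execute("INSERT INTO investment_projects VALUES ('Wales', 'net zero', 25)")
second_result = run_query(conn)
```

Here `second_result` reflects the new row without any change to `QUERY`, which is what makes regular re-runs cheap.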

Sending up-to-date data

Our data ingestion infrastructure is based on Apache Airflow, running in Amazon Web Services (AWS). Airflow is an industry-standard platform for running and monitoring potentially complex automated workflows. We already use it to run SQL-based transformations for data inside Data Workspace. It was easy to use these pieces to regularly run the SQL query constructed by our domain experts against our data and send the results to Number 10.

Receiving the data

Number 10 have previously created rAPId: an easy-to-use application programming interface (API) for receiving and storing data. It was straightforward for our Apache Airflow instance to regularly run the SQL against our data and send the results to Number 10’s rAPId instance.
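The "run the SQL, then send the results" step can be sketched roughly as follows, using only the Python standard library. This is an illustration under stated assumptions, not our actual pipeline: the endpoint URL, the CSV format, the bearer token and the table are all placeholders, and the real rAPId API may look quite different. The request is built but deliberately not sent.

```python
import csv
import io
import sqlite3
import urllib.request

# Placeholder endpoint: NOT the real rAPId URL or path.
UPLOAD_URL = "https://rapid.example/upload"


def rows_to_csv(header, rows):
    """Serialise query results as UTF-8 encoded CSV bytes."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(header)
    writer.writerows(rows)
    return buffer.getvalue().encode("utf-8")


def build_upload_request(conn, query, token):
    """Run the query and wrap the results in an HTTP POST request."""
    cursor = conn.execute(query)
    header = [column[0] for column in cursor.description]
    body = rows_to_csv(header, cursor.fetchall())
    return urllib.request.Request(
        UPLOAD_URL,
        data=body,
        # The authentication scheme here is an assumption for illustration.
        headers={"Content-Type": "text/csv", "Authorization": f"Bearer {token}"},
        method="POST",
    )


# An in-memory database with made-up data stands in for the real source.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE results (region TEXT, total INTEGER)")
conn.execute("INSERT INTO results VALUES ('Wales', 75)")
request = build_upload_request(
    conn, "SELECT region, total FROM results", token="example-token"
)
# A scheduled job would then send it, for example with
# urllib.request.urlopen(request).
```

In a scheduler such as Airflow, a function like `build_upload_request` would be called from a task that runs on a regular interval, so each run sends whatever the query returns at that point.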

“DIT’s developed digital infrastructure is enabling us to create secure API data sharing interfaces, matching the rising demand for strong evidence in the design, delivery and evaluation of public policy.”

Cameron Chakraverty, Data Scientist, 10 Downing Street

Analysis rather than sourcing

The data we sent was used to provide the Prime Minister and their team with up-to-date information on foreign investment into net zero at a regional level. This allowed them to make informed decisions that shaped net zero investment policy and aligned with the government's Levelling Up ambition.

Cross-government collaboration

That’s it! Investment in our infrastructure meant that the process was straightforward. We and Number 10 had all the pieces, and all it took was wiring them together. Being a collaborative partner is one of DDaT’s core values, and this is just one of many examples of how we support other government departments.

In DDaT, we are faced with a wide range of technical and user interface challenges. It’s not just shuffling data from one place to another. We need to make sure that this data is accessible to a wide range of our users.

As in this project, we support expert Data Scientists constructing machine learning models on large amounts of data using a combination of SQL, Python and R. We also support less technical users who need to analyse smaller amounts of data via web-based point-and-click interfaces, or in Excel.

We’re constantly running user research to make sure we’re doing the right thing in this reasonably complex space. All of this is done while keeping an eye on security, balancing ease of use against data-related risks.

If working in a team facilitating data-driven policy decisions at the highest UK office sounds exciting, maybe DIT DDaT is the place for you.

Learn more about DDaT in our other blogs.

Feeling inspired to join our team? Check out our latest job roles on our DDaT careers page.